Article by Joel Gurin: “…A growing coalition of organizations, researchers, technologists, and civic leaders is working to save and preserve national data on many levels. Now it’s time to bring those lines of work together. We need a coordinated, national program to protect essential data and build alternatives where federal sources fail.

Such a program can begin by acknowledging that we cannot save everything. Data.gov, the federal portal for all the government’s public data, provides access to more than 400,000 datasets. Not all are equally important, equally used, or equally at risk. The challenge is to identify the most essential datasets—such as the ones that underpin public health, climate science, economic stability, education, and democratic accountability—and determine which are vulnerable.

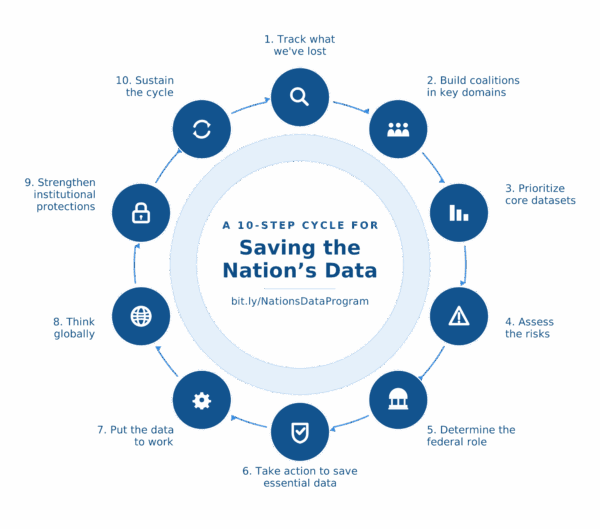

A practical, scalable strategy can include several steps:

1. Track what we’ve lost. We need a thorough, AI-enabled scan of the federal data ecosystem to see what’s already been lost or changed, and set up automated monitoring to detect even subtle changes going forward.

2. Build coalitions in key domains. Public health experts know which datasets matter most to disease surveillance. Climate scientists know which environmental indicators are irreplaceable. Education researchers know which federal surveys track opportunity. These experts must work alongside data scientists, AI specialists, and philanthropic partners to map what truly counts.

3. Prioritize core datasets. Through interviews, surveys, and quantitative analysis—such as tracking citations in research or journalism—coalitions can identify a “core canon” of essential datasets in each field.

4. Assess the risks. Tools like the Data Checkup, developed by dataindex.us, can assess threats to federal datasets. This work can be automated and scaled with AI.

5. Determine the federal role. Some federal data—like satellite observations, national health surveillance, or economic indicators—cannot be replicated by states or private actors. Other data can be supplemented or replaced by state and local sources, private‑sector datasets, crowdsourcing, or nontraditional data sources.

6. Take action to save essential data. When federal data is essential, coalitions can pursue advocacy, public comments, direct engagement with agencies, or litigation. When alternatives exist, they can be developed, benchmarked, and scaled.

7. Put the data to work. The best way to defend data is to use it. Publishing use cases, visualizations, tools, and plain‑language insights helps the public see why this information matters. Generative AI can make federal and open data accessible to millions of non‑technical users.

8. Think globally. The threats to data go beyond the U.S. We need to track the international impacts of U.S. data loss, study how international sources might replace U.S. data, and share lessons learned with other countries.

9. Strengthen institutional protections. In addition to managing today’s immediate problems, we need to develop policies, laws, governance strategies, and guardrails for more stable, reliable data in the future.

10. Sustain the cycle. The threats will evolve. So must the response…(More)”.
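The automated monitoring described in step 1 could, in its simplest form, compare content fingerprints between scans. The sketch below is only an illustration under stated assumptions, not the method the article proposes: it assumes each dataset can be fetched as raw bytes and that fingerprints from a previous scan were saved. The function names `fingerprint` and `detect_changes` and the sample dataset ids are hypothetical.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Return a SHA-256 hex digest; any byte-level change alters it."""
    return hashlib.sha256(content).hexdigest()


def detect_changes(previous: dict[str, str], current: dict[str, bytes]) -> dict[str, list[str]]:
    """Compare stored fingerprints against freshly fetched content.

    previous maps dataset id -> fingerprint saved by the last scan.
    current maps dataset id -> raw bytes fetched in this scan.
    """
    report = {"removed": [], "changed": [], "added": []}
    for dataset_id, old_digest in previous.items():
        if dataset_id not in current:
            report["removed"].append(dataset_id)
        elif fingerprint(current[dataset_id]) != old_digest:
            report["changed"].append(dataset_id)
    report["added"] = [d for d in current if d not in previous]
    for ids in report.values():
        ids.sort()  # stable ordering makes reports easy to compare
    return report


# Hypothetical example data, not real federal datasets.
last_scan = {
    "health-survey": fingerprint(b"year,cases\n2023,100\n"),
    "climate-index": fingerprint(b"year,temp\n2023,14.9\n"),
}
this_scan = {
    "health-survey": b"year,cases\n2023,101\n",  # a single value was edited
    "school-census": b"year,enrolled\n2023,5000\n",
}
report = detect_changes(last_scan, this_scan)
print(report)
```

A byte-level hash flags every change, including harmless reformatting, so a real monitor would likely also compare schemas, metadata, and documentation pages before raising an alert.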