Stefaan Verhulst

Report by Luca Picci and Jorge Rivera: “The development community is driving down a winding road while looking in the rearview mirror. Official statistics tell us where official development assistance (ODA) stood a year ago, with precision and authority. But they don’t tell us the road has turned until after the fact.

That’s a problem. It means that programme decisions, policy responses, and advocacy strategies are being made on the basis of incomplete or outdated data. In 2025, the four largest Development Assistance Committee (DAC) donors—the United States, Germany, the United Kingdom, and France—all cut ODA. Initial projections estimated that ODA would fall by between 9% and 17% in 2025 [1]; preliminary ODA figures published in April 2026 suggest that ODA fell by 23.1% [2]. Official detailed data for the 2025 cuts, disaggregated by donor, sector, and recipient, will not be available until December 2026, however, and detailed data on 2026 ODA will not be available until late 2027.

Nowcasting methods have been developed to address this problem. They estimate the current value of a lagged official statistic using data published at a higher frequency, updating estimates as new information arrives, and quantifying uncertainty. Nowcasting provides a blurred view through the windshield; still not a clear picture of the road, but enough to spot the turn.

This paper reviews the ODA data landscape and existing nowcasting approaches, and assesses which merit further investigation in the context of ODA. Our conclusion is that a nowcasting system for ODA is within methodological reach. Such a system could produce estimates that get updated as new information arrives, ahead of official data publication. Initially, this system would estimate ODA at the donor level for major DAC donors, with finer disaggregation contingent on what the available data can support…(More)”.
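As a rough illustration of the nowcasting idea in the excerpt above, the sketch below fits a one-variable "bridge" regression of a lagged annual statistic on a higher-frequency indicator, then re-estimates the current year as more months arrive. It is not the authors' method: the indicator, the numbers, the naive annualization, and the function names `fit_bridge` and `nowcast` are all assumptions made for illustration, and the ±2σ band ignores parameter and annualization uncertainty.

```python
from math import sqrt


def fit_bridge(indicator, official):
    """Ordinary least squares of past official values on one indicator."""
    n = len(indicator)
    mean_x = sum(indicator) / n
    mean_y = sum(official) / n
    sxx = sum((x - mean_x) ** 2 for x in indicator)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(indicator, official))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [y - (intercept + slope * x) for x, y in zip(indicator, official)]
    # Residual standard error with n - 2 degrees of freedom.
    sigma = sqrt(sum(r * r for r in residuals) / (n - 2))
    return intercept, slope, sigma


def nowcast(model, indicator_so_far, months_observed, months_total=12):
    """Point estimate and rough band from a partial-year indicator total."""
    intercept, slope, sigma = model
    # Naive annualization (an assumption): scale the partial total to a full year.
    annualized = indicator_so_far * months_total / months_observed
    point = intercept + slope * annualized
    return point, (point - 2 * sigma, point + 2 * sigma)


# Made-up history: annual indicator totals and the matching official figures.
history_indicator = [10.0, 12.0, 11.0, 14.0, 13.0]
history_official = [21.5, 24.8, 23.1, 29.6, 27.0]
model = fit_bridge(history_indicator, history_official)

# Re-run the estimate as each new month of the (made-up) indicator arrives.
for months, total in [(3, 2.9), (6, 5.6), (9, 8.1)]:
    estimate, band = nowcast(model, total, months)
    print(months, round(estimate, 2), tuple(round(b, 2) for b in band))
```

Each call uses only the information available at that point in the year, which is the sense in which the estimate "updates as new information arrives".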

Discussion Paper by the World Health Organization: “Artificial intelligence (AI) is increasingly shaping evidence-informed policy-making (EIP) in health by enabling faster analysis, synthesis and use of large and diverse data sources across the policy cycle. This discussion paper examines the intersection of AI and EIP, outlining how AI can support problem identification, policy design and implementation through enhanced data integration, predictive modelling, scenario simulation and adaptive feedback. It emphasizes that AI augments rather than replaces human judgement, while highlighting its potential to expand the evidence base and support more timely, responsive and iterative decision-making in complex health contexts. The paper analyses key risks and challenges associated with AI integration, including bias, opacity, equity concerns, data governance and regulatory gaps, and considers how these may affect different stages of the policy cycle. It reviews relevant governance frameworks and identifies areas of alignment between EIP and AI governance traditions. It further proposes practical considerations for responsible implementation, including human oversight, multidisciplinary collaboration, living evidence approaches and risk-based regulation. Intended for policy-makers, regulators and health stakeholders, the document provides a structured basis for leveraging AI to strengthen policy processes, improve health outcomes and maintain public trust…(More)”.

Book by Helen Pearson: “Today, more and more people around the globe are using scientific evidence to figure out what works—in health, government and business as well as conservation, schools and parenting. This wasn’t always the case. This book tells the story of the evidence revolution—a worldwide movement that promotes evidence-based thinking—and shows how it can help us all, especially in an age of alternative facts.

For many years, most medical advice was based on doctors’ opinions and conventional wisdom, not solid science. Helen Pearson describes how evidence-based medicine swept the world in the 1990s—becoming the predominant form of medicine practiced today—and how the idea that evidence should guide decisions is quietly transforming a host of other fields as well. Do police patrols reduce crime? Do performance appraisals boost job performance? Do welfare programs help the poor? Do smaller classes aid learning? Do smartphones harm teenagers? At a time when science is under attack and questionable claims run rampant, Pearson underscores the importance of evidence in all facets of our lives, empowering each of us to sift fact from falsehood and misinformation from the truth…(More)”.

Article by the Department for Enterprise (Isle of Man): “The legislation establishes the statutory framework for Data Asset Foundations, enabling data to be recognised, governed and managed as an asset within a clear legal structure.

Built on the Island’s existing Foundations Act 2011, it creates a new capability for businesses and marks a defining milestone in the Island’s wider digital and economic development. It establishes a world-first statutory framework to recognise data as an asset, placing the Isle of Man at the forefront of how data is treated within the global economy.

The initiative has been developed by Digital Isle of Man as part of the Island’s long-term Economic Strategy to leverage the Island’s strengths in regulation and security and offer a unique proposition for data businesses.

As data becomes increasingly central to global economic activity, organisations are looking for trusted and practical ways to govern and use it. By putting the legal foundations in place now, the Isle of Man is creating the conditions for new forms of economic activity, investment and high-value jobs across technology, professional services and data-led sectors.

In practical terms, this opens up new commercial opportunities, from data valuation and licensing to new fiduciary and assurance services, supporting both existing businesses and new entrants to the Island.

Businesses will be able to use the Data Asset Foundations framework to securely share data with partners without losing control of it, or to demonstrate its value as part of raising investment, creating new pathways for growth while maintaining strong governance.

For the Isle of Man, this is about creating new sources of economic growth, supporting high-value jobs and ensuring the Island remains competitive as global markets continue to evolve, while reinforcing its reputation as a trusted and well-regulated jurisdiction… More information about Data Asset Foundations is available at: www.digitalisleofman.com/data-asset-foundations …(More)”.

About: “An open-source toolkit for comparing and conflating Points of Interest (POIs) across major geospatial datasets.

OpenPOIs downloads current US-wide POI snapshots from multiple publicly available sources — currently OpenStreetMap and Overture Maps — and conflates them into a single unified dataset. The web map lets you explore each source side by side. Each POI in the conflated dataset is given a confidence score, which is the probability that the POI currently exists based on available data from both sources…(More)”.
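The conflation step described above can be pictured with a small sketch. This is not OpenPOIs' actual algorithm, which the excerpt does not describe: the matching thresholds, the per-source priors `prior_a` and `prior_b`, the noisy-OR combination, and the function names are all hypothetical, chosen only to show how two POI lists might be merged and scored.

```python
from difflib import SequenceMatcher
from math import asin, cos, radians, sin, sqrt


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points."""
    r = 6371000.0  # mean Earth radius in metres
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * r * asin(sqrt(a))


def is_match(a, b, max_dist_m=50.0, min_name_sim=0.8):
    """Treat two POIs as the same place if they are close and similarly named."""
    close = haversine_m(a["lat"], a["lon"], b["lat"], b["lon"]) <= max_dist_m
    sim = SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()
    return close and sim >= min_name_sim


def conflate(source_a, source_b, prior_a=0.7, prior_b=0.6):
    """Merge two POI lists; score each POI with a toy existence probability."""
    merged = []
    unmatched_b = list(source_b)
    for a in source_a:
        match = next((b for b in unmatched_b if is_match(a, b)), None)
        if match is not None:
            unmatched_b.remove(match)
            # Noisy-OR (an assumption): the POI is missing only if both sources are wrong.
            confidence = 1 - (1 - prior_a) * (1 - prior_b)
            merged.append({**a, "sources": 2, "confidence": confidence})
        else:
            merged.append({**a, "sources": 1, "confidence": prior_a})
    # POIs seen only in the second source keep that source's prior.
    merged.extend({**b, "sources": 1, "confidence": prior_b} for b in unmatched_b)
    return merged
```

Under these assumptions a POI corroborated by both sources scores higher than one seen in only one of them; a real system would presumably also weigh how recent and how detailed each record is.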

Article by Tiago C. Peixoto: “…For over a decade, those focused on the demand side of open data paid, and rightly so, lots of attention to who would use the data, and how. AI solves the demand-side problem. But the moment you build the agent and point it at real government data, you discover a supply-side problem that was always there but never fully exposed. The techno-mediator bottleneck was masking it. When only a handful of skilled developers and data journalists could query government APIs, the partial nature of the data caused limited damage. The few who did the query had enough domain expertise to cross-reference. AI removes that containment. If millions of citizens can now query budget data through AI agents, and the data systematically undercounts by a factor of five, the result is not accountability at scale. It is misinformation at scale, laundered through the authority of clean data and confident AI responses.

To be clear: the open data movement never assumed the data was already “out there.” The whole point was to advocate for its release. The problem came after. When governments did start publishing, the shortage of people who could query and assess the data meant that its quality went, in many cases, largely unexamined. The mediation failure that reduced the usefulness of open data for accountability purposes also made it less useful for quality control. If almost nobody can check whether a budget API returns 20% or 100% of the real figures, governments face no cost for publishing incomplete extracts. The very conditions that weakened the demand side gave the supply side room to underdeliver, and to receive credit for it. Rather than a communication trick, openwashing was an architectural possibility created by the absence of capable users. And it was sustained by an institutional environment in which there was no requirement that a public-facing API reconcile with the government’s full internal financial records, no audit of coverage, and no penalty for publishing a clean but partial extract…(More)”.

Article by Matthew Gault: “Researchers working with data from the Internet Archive have discovered that a third of websites created since 2022 are AI-generated. The team of researchers—which includes people from Stanford, the Imperial College London, and the Internet Archive—published their findings online in a paper titled “The Impact of AI-Generated Text on the Internet.” The research also found that all this AI-generated text is making the web more cheery and less verbose. Inspired by the Dead Internet Theory—the idea that much of the internet is now just bots talking back and forth—the team set out to find out how ChatGPT and its competitors had reshaped the internet since 2022. “The proliferation of AI-generated and AI-assisted text on the internet is feared to contribute to a degradation in semantic and stylistic diversity, factual accuracy, and other negative developments,” the researchers write in the paper. “We find that by mid-2025, roughly 35% of newly published websites were classified as AI-generated or AI-assisted, up from zero before ChatGPT’s launch in late 2022…(More)”.

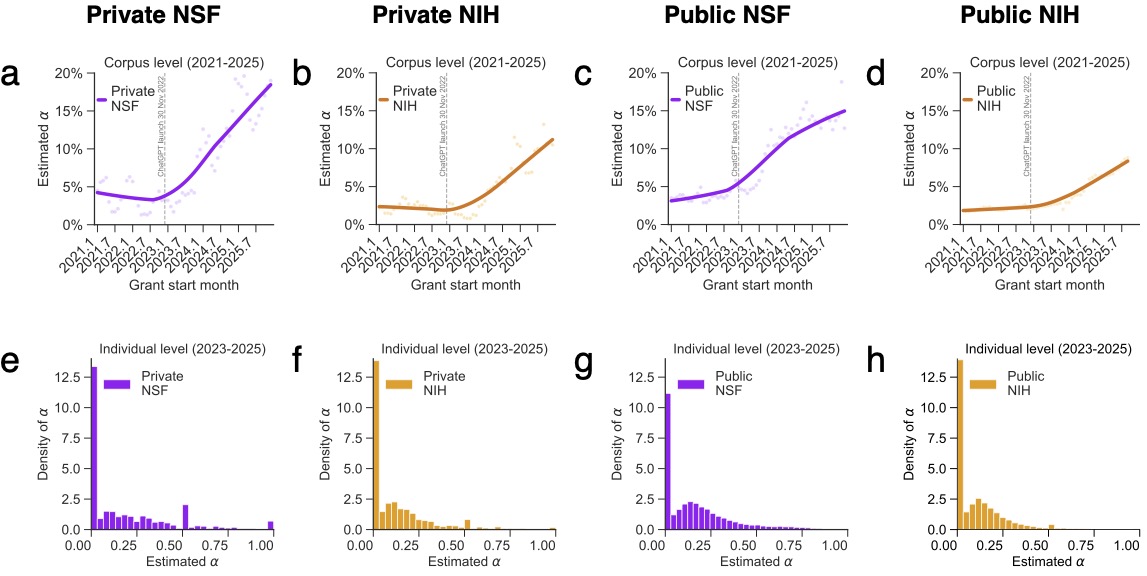

Article by Northwestern Innovation Institute: “Federal agencies such as the NIH and the National Science Foundation help determine which scientific ideas receive public support, which researchers are able to pursue ambitious work and which fields gain momentum. Because those decisions shape the future direction of discovery, even subtle shifts in how proposals are written, evaluated and selected can have lasting effects across the research ecosystem.

Yet while large language models such as ChatGPT have rapidly entered classrooms, offices and laboratories, far less attention has been paid to how they may be influencing the grant process itself. Proposal writing is often one of the most time-consuming parts of academic life, and AI tools can reduce that burden by helping draft language, summarize prior work and improve organization.

To examine how those tools may already be affecting funding outcomes, researchers at Northwestern Innovation Institute analyzed confidential proposal submissions from two major U.S. research universities together with the full population of publicly released NIH and NSF awards from 2021 through 2025. The combined dataset — made possible in part through Bridge, a collaborative initiative at the Innovation Institute that integrates research, funding and innovation data across partner institutions — offered a rare window into both funded and unfunded proposals at the earliest stage of the research pipeline.

Signs of AI-assisted writing rose sharply beginning in 2023, shortly after generative AI tools became widely available. At NIH, proposals with higher levels of AI involvement were more likely to receive funding and went on to produce more publications. But that productivity gain came with an important qualifier: the additional output was concentrated in ordinary papers rather than the most highly cited work. AI-assisted grants produced more research, but not necessarily more breakthroughs.

Across both agencies, proposals with stronger AI signals also tended to be less distinctive from recently funded work. Crucially, the study found this reflects genuine shifts in what researchers are proposing, not merely how they are writing — when the researchers held scientific content constant and applied AI rewriting to existing abstracts, the semantic position of those proposals barely changed. The convergence is happening at the level of ideas.

These findings directly address both open questions. The productivity gains (more publications, but not more breakthroughs, and only at NIH) suggest that AI is primarily lowering the cost of communication rather than accelerating scientific execution. And by observing confidential, unfunded proposals alongside funded awards, the study shows that AI’s influence is already operating upstream, reshaping how ideas are articulated and positioned before they ever reach publication…(More)”.

Paper by Kimitaka Asatani et al: “Intergovernmental organizations (IGOs) attempt to shape global policy through scientific guidelines and assessments. While they rely on external scientists to bridge research and IGO advisory processes, the structural pathways connecting science to IGO documents remain unexamined. By linking 230,737 scientific papers referenced in IGO documents (2015–2023) to their authors and coauthorship networks across 23 research fields, we identified a small cohort of “Highly IGO-Cited Scientists” (HIC-Sci)—typically comprising 0.7% to 4.4% of authors whose work accounts for 30% of IGO-cited papers. This structural concentration is associated with relational and cognitive patterns: dense transnational collaboration networks, overlapping memberships on advisory bodies such as the Intergovernmental Panel on Climate Change, rapid uptake in IGO documents, and standardized policy-oriented vocabularies. Geographically, HIC-Sci networks follow a core–periphery structure centered on Western Europe. Established fields, such as climate modeling, show stronger concentration, whereas emerging domains such as data science & AI show more distributed citation patterns. Major IGOs frequently cocite the same HIC-Sci papers, compounding this concentration through synchronized diffusion across IGOs. This concentration persists despite IGOs’ efforts to broaden participation and diversify their evidence base. While IGOs have developed criteria for selecting knowledge in advance, our framework provides a basis for subsequent assessment of how IGOs’ efforts to influence policy rely on a concentrated set of HIC-Sci…(More)”.

Article by James W Kelly: “Medical information of 500,000 participants of one of the UK’s landmark scientific programmes, UK Biobank, was offered for sale online in China, the government has confirmed.

Technology minister Ian Murray said information of all members of the database was found listed for sale on the website Alibaba.

Murray told MPs the charity which runs UK Biobank had told the government about the breach on Monday. He said the information did not include names, addresses, contact details or telephone numbers.

However, he said it could include gender, age, month and year of birth, socioeconomic status, lifestyle habits, and measures from biological samples.

The Biobank is a collection of health data offered by volunteers which has been used to help improve the detection and treatment of dementia, some cancers and Parkinson’s.

It has collected intimate details – including whole body scans, DNA sequences and their medical records – from hundreds of thousands of volunteers for over two decades. The project has led to more than 18,000 scientific publications.

Participants were aged from 40 to 69 when they were recruited between 2006 and 2010.

UK Biobank said it was investigating the incident and thanked the UK and Chinese governments, as well as Alibaba, for support and cooperation…(More)”.