Stefaan Verhulst

Paper by O.B. Leal-Neto et al: “Participatory surveillance has shown promising results from its conception to its application in several public health events. The use of a collaborative information pathway provides a rapid way to collect data on symptomatic individuals in the territory, to complement traditional health surveillance systems. In Brazil, this methodology has been used at the national level since 2014 during mass gathering events since they have great importance for monitoring public health emergencies.

With the occurrence of the COVID-19 pandemic, and the limitation of the main non-pharmaceutical interventions for epidemic control – in this case, testing and social isolation – added to the challenge of existing underreporting of cases and delay of notifications, there is a demand for alternative sources of up-to-date information to complement the current system for disease surveillance. Several studies have demonstrated the benefits of participatory surveillance in coping with COVID-19, reinforcing the opportunity to modernize the way health surveillance has been carried out. Additionally, spatial scanning techniques have been used to understand syndromic scenarios, investigate outbreaks, and analyze epidemiological risk, constituting relevant tools for health management. While there are limitations in the quality of traditional health systems, the data generated by participatory surveillance reveals an interesting application combining traditional techniques to clarify epidemiological risks that demand urgency in decision-making. Moreover, with the limitations of testing available, the identification of priority areas for intervention is an important activity in the early response to public health emergencies. This study aimed to describe and analyze priority areas for COVID-19 testing combining data from participatory surveillance and traditional surveillance for respiratory syndromes….(More)”.

Paper by Thibault Schrepel: “One may identify two current trends in the field of “Law and Technology.” The first trend concerns technological determinism. Some argue that technology is deterministic: the state of technological advancement is the determining factor of society. Others oppose that view, claiming it is the society that affects technology. The second trend concerns technological neutrality. Some say that technology is neutral, meaning the effects of technology depend entirely on the social context. Others defend the opposite: they view the effects of technology as being inevitable (regardless of the society in which it is used).

While it is commonly accepted that technology is deterministic, I am under the impression that a majority of “Law and Technology” scholars also believe that technology is non-neutral. It follows that, according to this dominant view, (1) technology drives society in good or bad directions (determinism), and that (2) certain uses of technology may lead to the reduction or enhancement of the common good (non-neutrality). Consequently, this leads to top-down tech policies where the regulator has the impossible burden of helping society control and orient technology to the best possible extent.

This article is deterministic and non-neutral.

But, here’s the catch. Most of today’s doctrine focuses almost exclusively on the negativity brought by technology (read Nick Bostrom, Frank Pasquale, Evgeny Morozov). Sure, these authors mention a few positive aspects, but still end up focusing on the negative ones. They’re asking to constrain technology on that sole basis. With this article, I want to raise another point: technology determinism can also drive society by providing solutions to centuries-old problems. In and of itself. This is not technological solutionism, as I am not arguing that technology can solve all of mankind’s problems, but it is not anti-solutionism either. I fear the extremes, anyway.

To make my point, I will discuss the issue addressed by Albert Hirschman in his famous book Exit, Voice, and Loyalty (Harvard University Press, 1970). Hirschman, at the time Professor of Economics at Harvard University, introduces the distinction between “exit” and “voice.” With exit, an individual exhibits her or his disagreement as a member of a group by leaving the group. With voice, the individual stays in the group but expresses her or his dissatisfaction in the hope of changing its functioning. Hirschman summarizes his theory on page 121, with the understanding that the optimal situation for any individual is to be capable of both “exit” and “voice”….(More)”.

Paper by Scheel, Anne M., Leonid Tiokhin, Peder M. Isager, and Daniel Lakens: “For almost half a century, Paul Meehl educated psychologists about how the mindless use of null-hypothesis significance tests made research on theories in the social sciences basically uninterpretable (Meehl, 1990). In response to the replication crisis, reforms in psychology have focused on formalising procedures for testing hypotheses. These reforms were necessary and impactful. However, as an unexpected consequence, psychologists have begun to realise that they may not be ready to test hypotheses. Forcing researchers to prematurely test hypotheses before they have established a sound ‘derivation chain’ between test and theory is counterproductive. Instead, various non-confirmatory research activities should be used to obtain the inputs necessary to make hypothesis tests informative.

Before testing hypotheses, researchers should spend more time forming concepts, developing valid measures, establishing the causal relationships between concepts and their functional form, and identifying boundary conditions and auxiliary assumptions. Providing these inputs should be recognised and incentivised as a crucial goal in and of itself.

In this article, we discuss how shifting the focus to non-confirmatory research can tie together many loose ends of psychology’s reform movement and help us lay the foundation to develop strong, testable theories, as Paul Meehl urged us to….(More)”

Blog by Katherine Flaschen and Ben Castleman: “In order to create the most effective solutions, policymakers and educators need to better understand a fundamental question: Why do so many of these students, many of whom have already made substantial progress toward their degree, fail to return to college and graduate? …

With a better understanding of the barriers preventing people who intend to finish their degree from following through, policymakers and colleges can create solutions that meaningfully meet students’ needs and help them re-enroll. As states across the country face rising unemployment rates, it’s critical to design and test interventions that address these behavioral barriers and help thousands of citizens who are out of work due to the COVID-19 crisis consider their options for going back to school.

For example, colleges could provide monetary incentives to students for taking actions related to re-enrollment that overcome these barriers, such as speaking with an advisor, reviewing upcoming recommended courses and developing a course plan, and making an active choice about when to return to college. In addition, SCND [some college, no degree] students could be paired with current students to serve as peer mentors, both to provide support with the re-enrollment process and to hold them accountable for degree completion (especially if faced with difficult remaining classes). Community colleges could also encourage major employers of the SCND population in high-demand fields, like health care, to provide options for employees to finish their degree while working (e.g., via tuition reimbursement programs), translate degree attainment into concrete career returns, and identify representatives within the company, such as recent graduates, to promote re-enrollment and make it a more salient opportunity….(More)”.

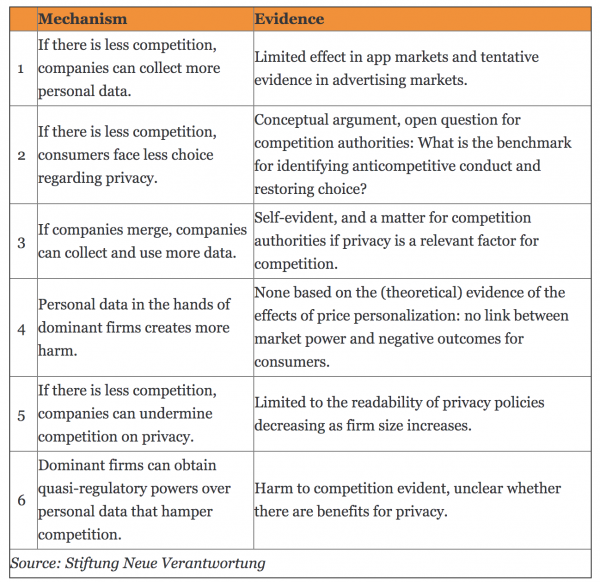

Paper by Aline Blankertz: “A small number of large digital platforms increasingly shape the space for most online interactions around the globe and they often act with hardly any constraint from competing services. The lack of competition puts those platforms in a powerful position that may allow them to exploit consumers and offer them limited choice. Privacy is increasingly considered one area in which the lack of competition may create harm. Because of these concerns, governments and other institutions are developing proposals to expand the scope for competition authorities to intervene to limit the power of the large platforms and to revive competition.

The first case that has explicitly addressed anticompetitive harm to privacy is the German Bundeskartellamt’s case against Facebook in which the authority argues that imposing bad privacy terms can amount to an abuse of dominance. Since that case started in 2016, more cases deal with the link between competition and privacy. For example, the proposed Google/Fitbit merger has raised concerns about sensitive health data being merged with existing Google profiles and Apple is under scrutiny for not sharing certain personal data while using it for its own services.

However, addressing bad privacy outcomes through competition policy is effective only if those outcomes are caused, at least partly, by a lack of competition. Six distinct mechanisms can be distinguished through which competition may affect privacy, as summarized in Table 1. These mechanisms constitute different hypotheses through which less competition may influence privacy outcomes and lead either to worse privacy in different ways (mechanisms 1-5) or even better privacy (mechanism 6). The table also summarizes the available evidence on whether and to what extent the hypothesized effects are present in actual markets….(More)”.

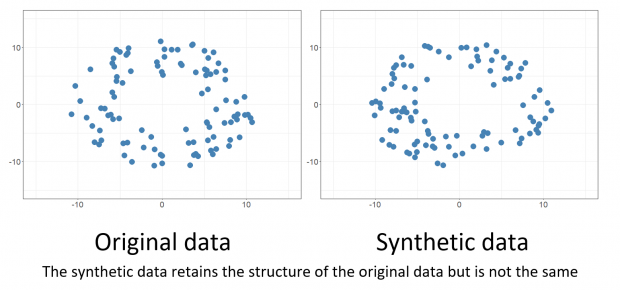

Karen Walker at Gov.UK: “Defence generates and holds a lot of data. We want to be able to get the best out of it, unlocking new insights that aren’t currently visible, through the use of innovative data science and analytics techniques tailored to defence’s specific needs. But this can be difficult because our data is often sensitive for a variety of reasons. For example, this might include information about the performance of particular vehicles, or personnel’s operational deployment details.

It is therefore often challenging to share data with experts who sit outside the Ministry of Defence, particularly amongst the wider data science community in government, small companies and academia. The use of synthetic data gives us a way to address this challenge and to benefit from the expertise of a wider range of people by creating datasets which aren’t sensitive. We have recently published a report from this work….(More)”.

Book edited by Maggie Walter, Tahu Kukutai, Stephanie Russo Carroll and Desi Rodriguez-Lonebear: “This book examines how Indigenous Peoples around the world are demanding greater data sovereignty, and challenging the ways in which governments have historically used Indigenous data to develop policies and programs.

In the digital age, governments are increasingly dependent on data and data analytics to inform their policies and decision-making. However, Indigenous Peoples have often been the unwilling targets of policy interventions and have had little say over the collection, use and application of data about them, their lands and cultures. At the heart of Indigenous Peoples’ demands for change are the enduring aspirations of self-determination over their institutions, resources, knowledge and information systems.

With contributors from Australia, Aotearoa New Zealand, North and South America and Europe, this book offers a rich account of the potential for Indigenous data sovereignty to support human flourishing and to protect against the ever-growing threats of data-related risks and harms….(More)”.

Book edited by Markus D. Dubber, Frank Pasquale, and Sunit Das: “This volume tackles a quickly-evolving field of inquiry, mapping the existing discourse as part of a general attempt to place current developments in historical context; at the same time, breaking new ground in taking on novel subjects and pursuing fresh approaches.

The term “A.I.” is used to refer to a broad range of phenomena, from machine learning and data mining to artificial general intelligence. The recent advent of more sophisticated AI systems, which function with partial or full autonomy and are capable of tasks which require learning and ‘intelligence’, presents difficult ethical questions, and has drawn concerns from many quarters about individual and societal welfare, democratic decision-making, moral agency, and the prevention of harm. This work ranges from explorations of normative constraints on specific applications of machine learning algorithms today – in everyday medical practice, for instance – to reflections on the (potential) status of AI as a form of consciousness with attendant rights and duties and, more generally still, on the conceptual terms and frameworks necessary to understand tasks requiring intelligence, whether “human” or “A.I.”…(More)”.

Book edited by Corneliu Bjola and Ruben Zaiotti: “This book examines how international organisations (IOs) have struggled to adapt to the digital age, and with social media in particular.

The global spread of new digital communication technologies has profoundly transformed the way organisations operate and interact with the outside world. This edited volume explores the impact of digital technologies, with a focus on social media, for one of the major actors in international affairs, namely IOs. To examine the peculiar dynamics characterising the IO–digital nexus, the volume relies on theoretical insights drawn from the disciplines of International Relations, Diplomatic Studies, Media, and Communication Studies, as well as from Organisation Studies.

The volume maps the evolution of IOs’ “digital universe” and examines the impact of digital technologies on issues of organisational autonomy, legitimacy, and contestation. The volume’s contributions combine engaging theoretical insights with newly compiled empirical material and an eclectic set of methodological approaches (multivariate regression, network analysis, content analysis, sentiment analysis), offering a highly nuanced and textured understanding of the multifaceted, complex, and ever-evolving nature of the use of digital technologies by international organisations in their multilateral engagements….(More)”.

Essay by Geoff Shullenberger: “How 2010’s digital utopians became 2020’s tech prophets of doom… In June 2009, large protests broke out in Iran in the wake of a disputed election result. The unrest did not differ all that much from comparable episodes that had occurred elsewhere in the world over the preceding decades, but many Western observers became convinced that new digital platforms like Twitter and Facebook were propelling the movement. By the time the Arab Spring kicked off with an anti-government uprising in Tunisia the following year, the belief had become widespread that social media was fomenting insurgencies for liberalization in authoritarian regimes.

The most vigorous dissenter from this cheerful consensus was technology critic Evgeny Morozov, whose 2011 book The Net Delusion inveighed against the “cyber-utopianism” then common among academics, bloggers, journalists, activists, and policymakers. For Morozov, cyber-utopians were captive to a “naïve belief in the emancipatory nature of online communication that rests on a stubborn refusal to acknowledge its downside…(More)”.