Paper by Eric Wu et al.: “Medical artificial-intelligence (AI) algorithms are being increasingly proposed for the assessment and care of patients. Although the academic community has started to develop reporting guidelines for AI clinical trials, there are no established best practices for evaluating commercially available algorithms to ensure their reliability and safety. The path to safe and robust clinical AI requires that important regulatory questions be addressed. Are medical devices able to demonstrate performance that can be generalized to the entire intended population? Are commonly faced shortcomings of AI (overfitting to training data, vulnerability to data shifts, and bias against underrepresented patient subgroups) adequately quantified and addressed?

In the USA, the US Food and Drug Administration (FDA) is responsible for approving commercially marketed medical AI devices. The FDA releases publicly available information on approved devices in the form of a summary document that generally contains information about the device description, indications for use, and performance data of the device’s evaluation study. The FDA has recently called for improvement of test-data quality, improvement of trust and transparency with users, monitoring of algorithmic performance and bias on the intended population, and testing with clinicians in the loop. To understand the extent to which these concerns are addressed in practice, we have created an annotated database of FDA-approved medical AI devices and systematically analyzed how these devices were evaluated before approval. Additionally, we have conducted a case study of pneumothorax-triage devices and found that evaluating deep-learning models at a single site alone, which is often done, can mask weaknesses in the models and lead to worse performance across sites.

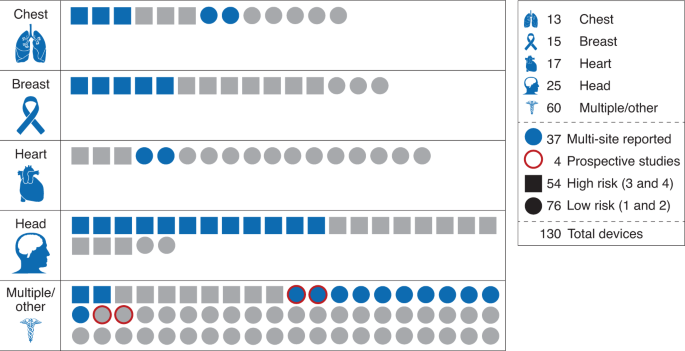

Fig. 1: Breakdown of 130 FDA-approved medical AI devices by body area.

Devices are categorized by risk level (square, high risk; circle, low risk). Blue indicates that a multi-site evaluation was reported; otherwise, symbols are gray. Red outline indicates a prospective study (key, right margin). Numbers in key indicate the number of devices with each characteristic…”