Stefaan Verhulst

Article by Kinling Lo: “For decades, the pilgrimage route for ambitious tech founders, investors, and engineers led to Silicon Valley. Now, a growing number of them are flying to Shanghai, Hangzhou, or Shenzhen instead. China is seeing a surge in a new kind of tech tourism where visitors pay up to $9,000 for curated tours of electric-vehicle factories, robotaxis, and artificial intelligence and robotics companies. The trend is partially triggered by viral videos of China’s dancing humanoid robots and flying cars, creating a sense that the country may be moving faster than the West in key emerging technologies. As China-U.S. tech competition intensifies, these trips are also becoming a means to explore the next investment opportunity and tech breakthrough.

“There’s a fear-of-missing-out dynamic at play: the sense that China’s tech ecosystem has reached a level of sophistication where not seeing it firsthand puts you at an informational disadvantage relative to competitors who have,” Shaoyu Yuan, an international relations adjunct professor at New York University who specializes in China’s soft power, told Rest of World…(More)”.

Paper by Lorenzo Gabrielli, Patrizia Sulis, Sara Thabit and Marco Minghini: “Open, non-governmental building datasets have become increasingly important for urban analysis, exposure modelling, and policy support. Despite their growing use, little is known about the consistency, completeness, and comparability of the semantic information they provide at a continental scale. This study presents the first systematic comparison of the semantic attributes of six major pan-European open building datasets—OpenStreetMap, EUBUCCO, Microsoft Global ML Building Footprints, Overture Maps, GHS-OBAT, and the Digital Building Stock Model (DBSM)—using the 27 EU Member States as a common reference area. Five key semantic attributes (height, typology, building age, number of floors, and building material) were harmonised and analysed in terms of completeness and value distributions across countries and degrees of urbanisation. The workflow combines API-based data ingestion, distributed geospatial processing, and high-performance computing to handle around 1.250 billion building footprints. Results reveal pronounced heterogeneity in semantic content across datasets. Remote-sensing-derived products (GHS-OBAT and DBSM) exhibit the highest levels of attribute completeness for height, typology, and building age, but rely on aggregated or coarse semantic representations. In contrast, community-driven and conflated datasets (OpenStreetMap and Overture Maps) provide richer and more detailed semantic schemas, albeit with low and spatially uneven completeness. Completeness patterns vary substantially across countries and urbanisation classes, and high completeness values often mask limited semantic informativeness due to the prevalence of unknown or aggregated attribute values. Overall, the findings demonstrate that no single dataset is universally optimal regarding consistency and completeness of building footprints’ semantic attributes. 
Nonetheless, the paper provides practical guidance for selecting suitable data sources depending on spatial scale, attribute requirements, and analytical objectives…(More)”.
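The abstract separates two ideas. Completeness means an attribute is filled in. Informativeness means the filled value actually says something. The minimal Python sketch below illustrates that difference. It rests on assumptions that do not come from the study: completeness is taken as the share of non-null values, and the placeholder values `unknown` and `other` are treated as uninformative. The records are invented toy values, not data from the paper.

```python
from typing import Iterable, Optional

# Assumption: these placeholder values count as "filled but uninformative".
# The study's actual coding of unknown or aggregated values may differ.
UNINFORMATIVE = {"unknown", "other"}


def completeness(values: Iterable[Optional[str]]) -> float:
    """Share of records whose attribute is present at all (not None)."""
    vals = list(values)
    if not vals:
        return 0.0
    return sum(v is not None for v in vals) / len(vals)


def informative_share(values: Iterable[Optional[str]]) -> float:
    """Share of records whose attribute is present and carries a specific value."""
    vals = list(values)
    if not vals:
        return 0.0
    return sum(
        v is not None and v.lower() not in UNINFORMATIVE for v in vals
    ) / len(vals)


# Invented toy values for one attribute (building typology), not from the paper.
typology = ["residential", "unknown", "unknown", None,
            "commercial", "unknown", "other", None]

print(f"completeness:      {completeness(typology):.2f}")       # 0.75
print(f"informative share: {informative_share(typology):.2f}")  # 0.25
```

In this toy case, three quarters of the records look "complete", yet only a quarter carry a usable typology. This is the kind of gap the authors describe when they say high completeness can mask limited informativeness.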

Article by Stefaan Verhulst and Begoña G. Otero: “The European Commission’s Technological Sovereignty Package (IP/26/1187) marks an important moment in the global political economy of the digital age. Presented by Commission President Ursula von der Leyen as an existential imperative for protecting critical infrastructure, the initiative signals an increasingly assertive European response to a rapidly changing geopolitical landscape. Through proposed initiatives such as the Chips Act 2.0, the Cloud and AI Development Act, the Open Source Strategy, and the Strategic Roadmap for Digitalisation and AI in Energy, Brussels has made clear that digital infrastructure is no longer viewed merely as an engine of economic growth but as a strategic asset central to security, competitiveness, and geopolitical influence.

Together, these measures seek to strengthen Europe’s position across the full digital value chain: expanding domestic semiconductor production and advanced chip design capabilities; tripling Europe’s data center capacity over the coming five to seven years; accelerating the deployment of cloud and AI infrastructure; scaling the adoption of artificial intelligence through a network of Experience and Acceleration Centres (AI Factories); promoting open-source alternatives in cloud, AI, cybersecurity, internet technologies, and semiconductors; and integrating digital infrastructure more directly into Europe’s energy system.

Yet technological sovereignty should not be understood as an end in itself. The ultimate objective cannot simply be to manufacture more chips, build more data centers, or host more AI models within European borders. Rather, it should be to ensure that individuals, communities, businesses, and public institutions have meaningful agency over the digital systems that increasingly shape economic opportunity, democratic participation, cultural expression, and public life. Viewed through this lens, the debate around technological sovereignty is fundamentally a debate about digital self-determination: who has the ability to shape the digital systems upon which society depends, under what conditions, and for whose benefit. It follows, then, that tackling asymmetry by creating new asymmetries is not a desirable outcome. A sovereignty that simply transfers concentrated power from foreign to domestic hands, or that substitutes one set of gatekeepers for another, would resolve the geopolitical problem while reproducing the democratic one. How an infrastructure distributes power is not fixed by the technology itself but by the institutions and rules built around it: concentration is a choice, not an inevitability. What matters, then, is not who holds power over digital systems but whether that power is distributed, accountable, and open to challenge.

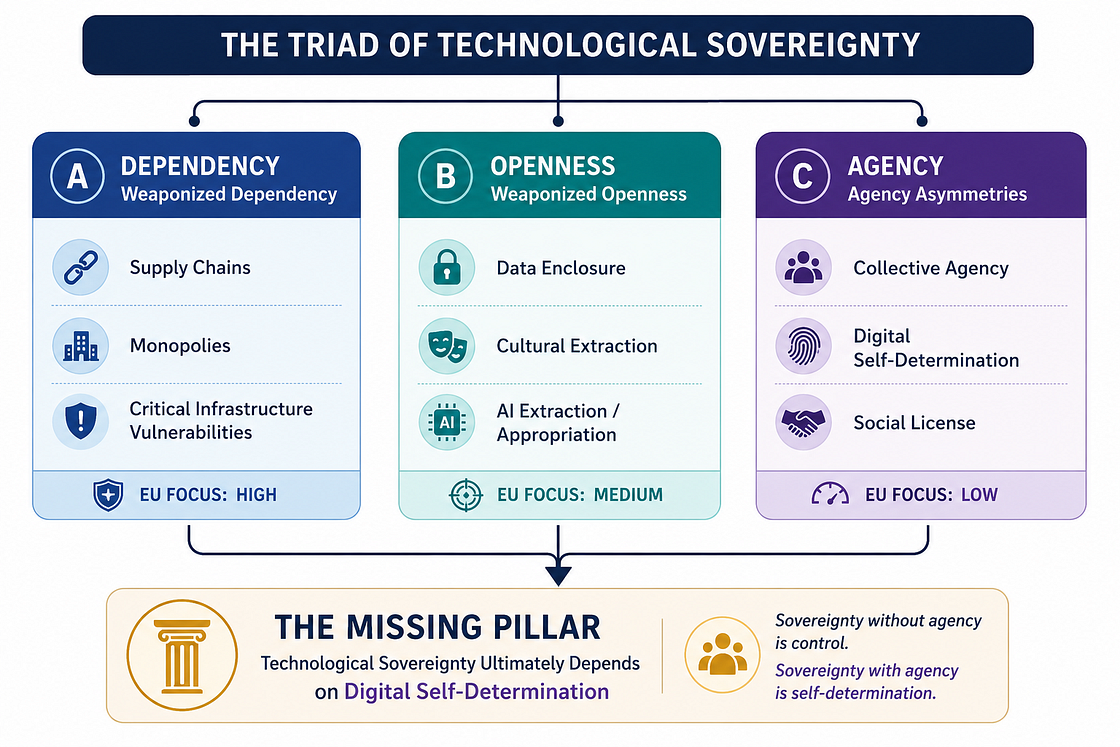

The Triad of Tech Sovereignty

As the global debate over technological sovereignty intensifies, this quest is increasingly unfolding across three interconnected dimensions (what we call the “Triad of Tech Sovereignty”):

- the weaponization of structural dependency, where reliance on foreign infrastructure becomes vulnerability;

- the weaponization of digital openness, where access and interoperability become mechanisms for extraction; and

- systemic asymmetries of agency, where those most affected by decisions and technical systems often have the least say.

While Europe’s latest initiatives offer substantial responses to the first dimension and growing attention to the second, the third is noticeably misaligned. By prioritizing the hard infrastructure of sovereignty over the democratic imperative of self-determination, Europe risks building an impressive industrial fortress without securing the social foundations required to sustain it…(More)”.

Book by Andrew Lison: “Since the end of the Second World War, we have come to expect continual growth in computing power and the rapid development of digital technology. This dynamic has enabled informational procedures to supplant an ever-increasing range of human and mechanical activity. However, indications that the semiconductor industry is approaching the physical limits of integrated circuitry pose an existential challenge to Intel Corporation cofounder Gordon Moore’s “law,” which prescribes an exponential increase in microchip density—and, by extension, processing performance—every two years.

Placing theories of employment in dialectical conjunction with the concrete operations of computing, 100% Utilization explores the consequences of pushing processing power to its limits for a culture seemingly reliant on automation as much as human labor. In accounting for this contradiction, Andrew Lison offers a corrective to theories of digital mediation, emphasizing its symbolic and representational capabilities. He connects the looming end of Moore’s law to trends in semiconductor manufacturing, custom hardware, and parallelized software techniques, including AI. Ultimately, he traces this historical technological boom and impending bust through the racialized history of Silicon Valley to longer-term conceptions of the relationship between machinery and labor…(More)”.

Book by James M. Tabor: “In 1854, the American entrepreneur Cyrus Field set out to lay a 2,000-mile telegraph cable across the bottom of the Atlantic Ocean. Nothing like it had ever been attempted. Field knew nothing about telegraphy, electricity, ships, or oceans, and science itself still lacked a universal theory of electricity. But he believed that wiring the world for near-instantaneous communication would bring about peace on Earth. In 1866, after enduring over a decade of global scorn, catastrophic failures, staggering losses, and brushes with death, he would finally lay his great cable, ushering in the global information age. From acclaimed author James M. Tabor, Lightning Beneath the Sea is an unforgettable tale of radical vision, unwavering determination, and triumph against overwhelming odds that transformed life on Earth forever.

In a propulsive narrative, Tabor tells how Field swiftly assembled an all-star scientific dream team that included telegraph legend Samuel F. B. Morse; a young Lord Kelvin, called the da Vinci of his day; Michael Faraday, the father of electrical engineering; and legendary philanthropist Peter Cooper. Together they battled epic storms, freak accidents, corporate sabotage, the enmity of Abraham Lincoln, and the hubris of the project’s original chief electrician—an eccentric who insisted on being called Wildman—while racing two rival efforts to establish telegraphic communications between continents. When it was finally done, Field’s cable lay up to 2.5 miles deep under the ocean, and the London Daily News announced: “Time and space seem literally annihilated.” The cable’s legacy can be traced today in the hundreds of descendants that still carry 98 percent of the world’s information through a “world undersea web.”…(More)”.

Paper by Marcia Langton, Robert McLellan, Jennifer Fewster, and Kristen Smith: “The Framework for the Governance of Indigenous Data has been developed to guide ethical, inclusive, and culturally grounded data practices across the Humanities, Arts, Social Sciences, and Indigenous Research Data Commons (HASS and Indigenous RDC).

It responds to long-standing calls from Aboriginal and Torres Strait Islander communities for greater control over data that affects their lives, lands, cultures, and futures. The Framework is the result of extensive consultation, co-design, and collaboration with Indigenous data custodians, researchers, institutions, and policymakers. It is intended to be adopted across all HASS and Indigenous RDC activities.

This Framework affirms that Indigenous data governance is essential to Indigenous self-determination. It defines Indigenous data as information generated by, about, or for Aboriginal and Torres Strait Islander peoples, encompassing cultural, ecological, linguistic, genealogical, and community-generated knowledge. The Framework recognises that all data – whether held by governments, institutions, or communities – has implications for Indigenous peoples and must be governed in ways that respect Indigenous rights, laws, and relational worldviews.

The Framework is structured around three interrelated components: a Governance Model, a set of Governance Guidelines, and a Monitoring and Accountability structure. The Governance Model identifies 5 foundational elements:

- Recognition of Indigenous assets

- Partnership

- Building capabilities

- Self-determination

- Inclusive data ecosystem

These elements underpin the Framework’s guidelines and practices, which provide actionable strategies for institutions and communities to embed Indigenous governance across the data lifecycle.

The Framework is grounded in the CARE Principles for Indigenous Data Governance (Collective Benefit, Authority to Control, Responsibility, and Ethics) and complements the FAIR Principles (Findable, Accessible, Interoperable, and Reusable)…(More)”.

Article by Ashley Farley: “When the Gates Foundation’s Open Access Policy was established, in 2015, the aim was to shift the ecosystem so that open access became the norm. In the years that followed, while open access output did increase, the overall ecosystem shifted in the wrong direction: costs rose sharply and commercial capture intensified.

In 2024, as the foundation reflected on what about the policy was working and what could be improved, we had two choices: to stay the course with a policy that wasn’t improving the broader ecosystem or to cease support for unsustainable practices. We chose to confront the systemic issues in open access publishing head-on, updating our policy to require Gates-funded research be shared as preprints. This change enabled the foundation to divest from restrictive article processing charges (APCs) and redirect resources toward more equitable and sustainable open access models….As APCs became the dominant model for open access publishing, they crowded out other, more equitable approaches and made it difficult for alternative models to scale. The foundation’s decision to stop paying APCs will inevitably put pressure on the broader publishing ecosystem, with both positive and challenging effects.

While the intention is to build a more equitable open access system, we recognize that authors without access to alternative funding for APCs will face tougher publishing choices in the short term. But our data shows that grantees continue to find ways to pay for APCs. And the new policy intentionally preserves author choice. Authors are not required to choose journals that levy APCs. As the various financial streams that flow to publishers start to consolidate, that flow could better reflect the values of funders and institutions, creating pressure that encourages publishers—especially large commercial ones—to reduce their prices. Although publishing carries real costs, charging researchers thousands of dollars to make their work openly available is rooted in inequitable, colonial structures…(More)”.

Article by Daniela Paolotti and Stefaan Verhulst: “The emergence of Hantavirus cases appeared, at first glance, to be a localized public health incident. Yet, when viewed alongside recurring Ebola outbreaks, growing concerns about avian influenza, and other zoonotic disease threats, these events highlight a broader lesson from COVID-19: our vulnerability to infectious disease outbreaks is shaped not only by the pathogens themselves but also by the preparedness of our data ecosystems and our ability to translate information into timely action. In particular, responsible access to non-traditional data sources (including mobility data, online search behavior, social media activity, transaction records, crowdsourced information, and other digital traces) has become critical in complementing traditional surveillance systems. These data sources can provide earlier and more granular insights into emerging risks, helping decision-makers detect outbreaks sooner, understand behavioral dynamics, target interventions more effectively, and strengthen overall preparedness and response efforts.

As such, these recent outbreaks provide a useful lens through which to revisit some of the insights of our recent research on non-traditional data and pandemic preparedness. Below, we share key insights from our analysis of the COVID-19 response, reflect on their continued relevance in the context of the current Hantavirus and Ebola outbreaks, and outline three recommendations to help ensure that the barriers, delays, and missed opportunities of past crises are not repeated.

This article is not intended to be a comprehensive assessment of either ongoing outbreak; such an undertaking will require more extensive epidemiological, operational, and governance analysis (which we recommend). Rather, our objective is to highlight a number of emerging warning signs and recurring challenges that deserve more serious attention…(More)”.

Press release: “The European Commission today presented the European Technological Sovereignty Package, a set of measures to strengthen Europe’s capacity in semiconductors, artificial intelligence (AI), cloud and open source.

Commission President Ursula von der Leyen said: “We cannot afford to depend on others for the technologies that keep our hospitals running, our energy grids stable and our services secure. This is about protecting our citizens, defending our interests and making our own choices. Europe has the talent, the research excellence, the industrial base and the Single Market. Together, we must turn these strengths into technological sovereignty.”

The package includes two legislative proposals – the Chips Act 2.0 and the Cloud and AI Development Act – as well as the Open Source Strategy and a Strategic Roadmap for Digitalisation and AI in Energy.

Together, these measures support Europe’s ambition to become an AI continent, strengthen its digital autonomy and help build a more sustainable digital future. They will help widen choice in core technologies for EU businesses, citizens and public administrations.

The move comes as Europe remains heavily dependent on suppliers outside the European Union for core digital technologies and as demand for computing capacity rises sharply with the spread of AI. It is designed to reduce structural dependencies and make sure Europe can develop, deploy and secure the technologies Europeans rely on. It signals a major shift in the EU’s approach to technology…(More)”.

Paper by Ankit Bhutani, Guillermo Ordoñez & Laura Veldkamp: “Data assets are increasingly vital in modern economies, yet macroeconomic measurement is not well-adapted to capturing their value. Part of the problem is that data is an intangible asset: investments in data are missed in national accounts, and depreciation losses are missed in firms’ balance sheets. Another part, unique to data, is that it serves as a means of payment in the modern economy: consumption bartered for data is also omitted from national accounts. We propose an output-based approach to measure the missing value of data. We treat data as an asset, measure its volume based on the quality of firms’ revenue forecasts, and endogenously determine its depreciation. We then capitalize the data value and explore what the measured GDP would be if the data were treated and transacted similarly to a physical asset. Our findings suggest that the aggregate value of data is about 1.5% of GDP…(More)”.