Blog by Andrew J. Zahuranec and Stefaan G. Verhulst: “The novel coronavirus disease (COVID-19) is a global health crisis the likes of which the modern world has never seen. Amid calls to action from the United Nations Secretary-General, the World Health Organization, and many national governments, there has been a proliferation of initiatives using data to address some facet of the pandemic. In March, The GovLab at NYU put out its own call to action, which identifies key steps organizations and decision-makers can take to build the data infrastructure needed to tackle pandemics. This call has been signed by over 400 data leaders from around the world in the public and private sectors and in civil society.

But questions remain as to how many of these initiatives are useful for decision-makers. While The GovLab’s living repository contains over 160 data collaboratives, data competitions, and other innovative work, many of these examples take a data supply-side approach to the COVID-19 response. Given the urgency of the situation, some organizations create projects that align with the available data instead of trying to understand what insights those responding to the crisis actually want, including issues that may not be directly related to public health.

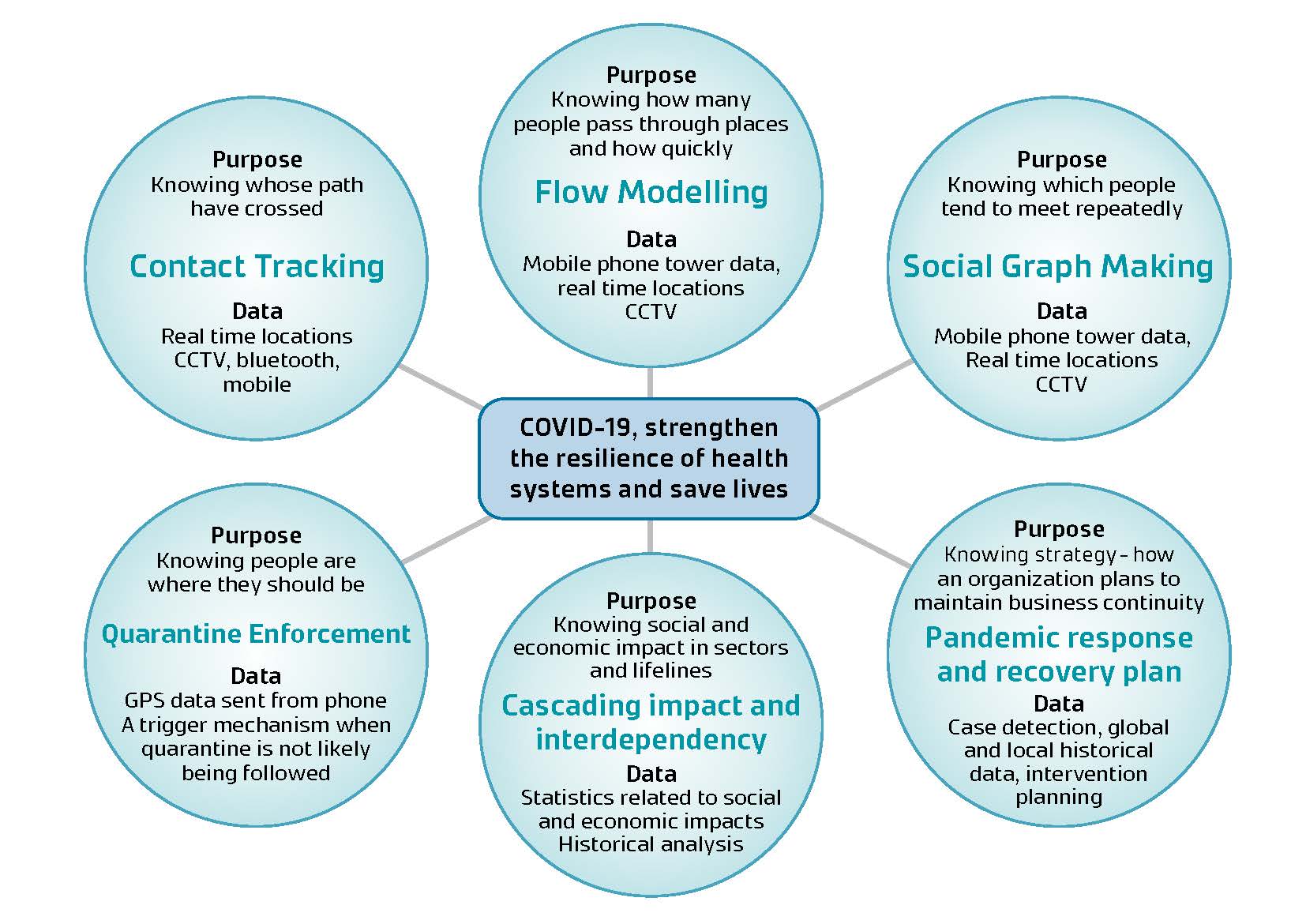

We need to identify and ask better questions to use data effectively in the current crisis. Part of that work means understanding what topics can be addressed through enhanced data access and analysis.

Using The GovLab’s rapid-research methodology, we’ve compiled a list of 12 topic areas related to COVID-19 where data and analysis are needed. …(More)”.