Paper by Michael Curtotti, Wayne Weibel, Eric McCreath, Nicolas Ceynowa, Sara Frug, and Tom R Bruce: “This paper sits at the intersection of citizen access to law, legal informatics and plain language. The paper reports the results of a joint project of the Cornell University Legal Information Institute and the Australian National University which collected thousands of crowdsourced assessments of the readability of law through the Cornell LII site. The aim of the project is to enhance accuracy in the prediction of the readability of legal sentences. The study requested readers on legislative pages of the LII site to rate passages from the United States Code and the Code of Federal Regulations and other texts for readability and other characteristics. The research provides insight into who uses legal rules and how they do so. The study enables conclusions to be drawn as to the current readability of law and spread of readability among legal rules. The research is intended to enable the creation of a dataset of legal rules labelled by human judges as to readability. Such a dataset, in combination with machine learning, will assist in identifying factors in legal language which impede readability and access for citizens. As far as we are aware, this research is the largest ever study of readability and usability of legal language and the first research which has applied crowdsourcing to such an investigation. The research is an example of the possibilities open for enhancing access to law through engagement of end users in the online legal publishing environment for enhancement of legal accessibility and through collaboration between legal publishers and researchers….(More)”

Big Data for Social Good

Introduction to a Special Issue of the Journal “Big Data” by Charlie Catlett and Rayid Ghani: “…organizations focused on social good are realizing the potential as well but face several challenges as they seek to become more data-driven. The biggest challenge they face is a paucity of examples and case studies on how data can be used for social good. This special issue of Big Data is targeted at tackling that challenge and focuses on highlighting some exciting and impactful examples of work that uses data for social good. The special issue is just one example of the recent surge in such efforts by the data science community. …

This special issue solicited case studies and problem statements that would either highlight (1) the use of data to solve a social problem or (2) social challenges that need data-driven solutions. From roughly 20 submissions, we selected 5 articles that exemplify this type of work. These cover five broad application areas: international development, healthcare, democracy and government, human rights, and crime prevention.

“Understanding Democracy and Development Traps Using a Data-Driven Approach” (Ranganathan et al.) details a data-driven model between democracy, cultural values, and socioeconomic indicators to identify a model of two types of “traps” that hinder the development of democracy. They use historical data to detect causal factors and make predictions about the time expected for a given country to overcome these traps.

“Targeting Villages for Rural Development Using Satellite Image Analysis” (Varshney et al.) discusses two case studies that use data and machine learning techniques for international economic development—solar-powered microgrids in rural India and targeting financial aid to villages in sub-Saharan Africa. In the process, the authors stress the importance of understanding the characteristics and provenance of the data and the criticality of incorporating local “on the ground” expertise.

In “Human Rights Event Detection from Heterogeneous Social Media Graphs,” Chen and Neil describe efficient and scalable techniques to use social media in order to detect emerging patterns in human rights events. They test their approach on recent events in Mexico and show that they can accurately detect relevant human rights–related tweets prior to international news sources, and in some cases, prior to local news reports, which could potentially lead to more timely, targeted, and effective advocacy by relevant human rights groups.

“Finding Patterns with a Rotten Core: Data Mining for Crime Series with Core Sets” (Wang et al.) describes a case study with the Cambridge Police Department, using a subspace clustering method to analyze the department’s full housebreak database, which contains detailed information from thousands of crimes from over a decade. They find that the method allows human crime analysts to handle vast amounts of data and provides new insights into true patterns of crime committed in Cambridge….(More)”

Data scientists rejoice! There’s an online marketplace selling algorithms from academics

SiliconRepublic: “Algorithmia, an online marketplace that connects computer science researchers’ algorithms with developers who may have uses for them, has exited its private beta.

Algorithms are essential to our online experience. Google uses them to determine which search results are the most relevant. Facebook uses them to decide what should appear in your news feed. Netflix uses them to make movie recommendations.

Founded in 2013, Algorithmia could be described as an app store for algorithms, with over 800 of them available in its library. These algorithms provide the means of completing various tasks in the fields of machine learning, audio and visual processing, and computer vision.

Algorithmia found a way to monetise algorithms by creating a platform where academics can share their creations and charge a royalty fee per use, while developers and data scientists can request specific algorithms in return for a monetary reward. One such suggestion is for ‘punctuation prediction’, which would insert correct punctuation and capitalisation in speech-to-text translation.

While it’s not the first algorithm marketplace online, Algorithmia will accept and sell any type of algorithm and host them on its servers. What this means is that developers need only add a simple piece of code to their software in order to send a query to Algorithmia’s servers, so the algorithm itself doesn’t have to be integrated in its entirety….

Computer science researchers can spend years developing algorithms, only for them to be published in a scientific journal never to be read by software engineers.

Algorithmia intends to create a community space where academics and engineers can meet to discuss and refine these algorithms for practical use. A voting and commenting system on the site will allow users to engage and even share insights on how contributions can be improved.

To that end, Algorithmia’s ultimate goal is to advance the development of algorithms as well as their discovery and use….(More)”

Encyclopedia of Social Network Analysis and Mining

“The Encyclopedia of Social Network Analysis and Mining (ESNAM) is the first major reference work to integrate fundamental concepts and research directions in the areas of social networks and applications to data mining. While ESNAM reflects the state-of-the-art in social network research, the field had its start in the 1930s when fundamental issues in social network research were broadly defined. These communities were limited to relatively small numbers of nodes (actors) and links. More recently the advent of electronic communication, and in particular on-line communities, has created social networks of hitherto unimaginable sizes. People around the world are directly or indirectly connected by popular social networks established using web-based platforms rather than by physical proximity.

Reflecting the interdisciplinary nature of this unique field, the essential contributions of diverse disciplines, from computer science, mathematics, and statistics to sociology and behavioral science, are described among the 300 authoritative yet highly readable entries. Students will find a world of information and insight behind the familiar façade of the social networks in which they participate. Researchers and practitioners will benefit from a comprehensive perspective on the methodologies for analysis of constructed networks, and the data mining and machine learning techniques that have proved attractive for sophisticated knowledge discovery in complex applications. Also addressed is the application of social network methodologies to other domains, such as web networks and biological networks….(More)”

Cultures of Code

Brian Hayes in the American Scientist: “Kim studies parallel algorithms, designed for computers with thousands of processors. Chris builds computer simulations of fluids in motion, such as ocean currents. Dana creates software for visualizing geographic data. These three people have much in common. Computing is an essential part of their professional lives; they all spend time writing, testing, and debugging computer programs. They probably rely on many of the same tools, such as software for editing program text. If you were to look over their shoulders as they worked on their code, you might not be able to tell who was who.

Despite the similarities, however, Kim, Chris, and Dana were trained in different disciplines, and they belong to different intellectual traditions and communities. Kim, the parallel algorithms specialist, is a professor in a university department of computer science. Chris, the fluids modeler, also lives in the academic world, but she is a physicist by training; sometimes she describes herself as a computational scientist (which is not the same thing as a computer scientist). Dana has been programming since junior high school but didn’t study computing in college; at the startup company where he works, his title is software developer.

These factional divisions run deeper than mere specializations. Kim, Chris, and Dana belong to different professional societies, go to different conferences, read different publications; their paths seldom cross. They represent different cultures. The resulting Balkanization of computing seems unwise and unhealthy, a recipe for reinventing wheels and making the same mistake three times over. Calls for unification go back at least 45 years, but the estrangement continues. As a student and admirer of all three fields, I find the standoff deeply frustrating.

Certain areas of computation are going through a period of extraordinary vigor and innovation. Machine learning, data analysis, and programming for the web have all made huge strides. Problems that stumped earlier generations, such as image recognition, finally seem to be yielding to new efforts. The successes have drawn more young people into the field; suddenly, everyone is “learning to code.” I am cheered by (and I cheer for) all these events, but I also want to whisper a question: Will the wave of excitement ever reach other corners of the computing universe?…

What’s the difference between computer science, computational science, and software development?…(More)”

Computer-based personality judgments are more accurate than those made by humans

Paper by Wu Youyou, Michal Kosinski and David Stillwell at PNAS (Proceedings of the National Academy of Sciences): “Judging others’ personalities is an essential skill in successful social living, as personality is a key driver behind people’s interactions, behaviors, and emotions. Although accurate personality judgments stem from social-cognitive skills, developments in machine learning show that computer models can also make valid judgments. This study compares the accuracy of human and computer-based personality judgments, using a sample of 86,220 volunteers who completed a 100-item personality questionnaire. We show that (i) computer predictions based on a generic digital footprint (Facebook Likes) are more accurate (r = 0.56) than those made by the participants’ Facebook friends using a personality questionnaire (r = 0.49); (ii) computer models show higher interjudge agreement; and (iii) computer personality judgments have higher external validity when predicting life outcomes such as substance use, political attitudes, and physical health; for some outcomes, they even outperform the self-rated personality scores. Computers outpacing humans in personality judgment presents significant opportunities and challenges in the areas of psychological assessment, marketing, and privacy…(More)”.
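For readers unfamiliar with the accuracy figures quoted above, here is a minimal sketch of how such an r value can be computed. It assumes r is the Pearson correlation between a judge's scores and the participants' self-reported scores, which the excerpt does not spell out. The function name `pearson_r` and all numbers are synthetic illustrations, not data from the study.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squares of the deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Synthetic trait scores for five hypothetical participants (not study data).
self_report = [3.0, 4.5, 2.0, 5.0, 3.5]
computer_judgment = [3.2, 4.0, 2.5, 4.8, 3.0]
friend_judgment = [2.0, 4.0, 3.5, 3.0, 4.5]

r_computer = pearson_r(self_report, computer_judgment)
r_friend = pearson_r(self_report, friend_judgment)
print(f"computer vs self-report: r = {r_computer:.2f}")
print(f"friend vs self-report:   r = {r_friend:.2f}")
```

A higher r means the judge's scores track the self-reports more closely. The synthetic numbers were chosen so that the computer judge scores higher, mirroring the direction of the reported finding only.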

Big Data, Machine Learning, and the Social Sciences: Fairness, Accountability, and Transparency

at Medium: “…So why, then, does granular, social data make people uncomfortable? Well, ultimately—and at the risk of stating the obvious—it’s because data of this sort brings up issues regarding ethics, privacy, bias, fairness, and inclusion. In turn, these issues make people uncomfortable because, at least as the popular narrative goes, these are new issues that fall outside the expertise of those aggregating and analyzing big data. But the thing is, these issues aren’t actually new. Sure, they may be new to computer scientists and software engineers, but they’re not new to social scientists.

This is why I think the world of big data and those working in it — ranging from the machine learning researchers developing new analysis tools all the way up to the end-users and decision-makers in government and industry — can learn something from computational social science….

So, if technology companies and government organizations — the biggest players in the big data game — are going to take issues like bias, fairness, and inclusion seriously, they need to hire social scientists — the people with the best training in thinking about important societal issues. Moreover, it’s important that this hiring is done not just in a token, “hire one social scientist for every hundred computer scientists” kind of way, but in a serious, “creating interdisciplinary teams” kind of way.

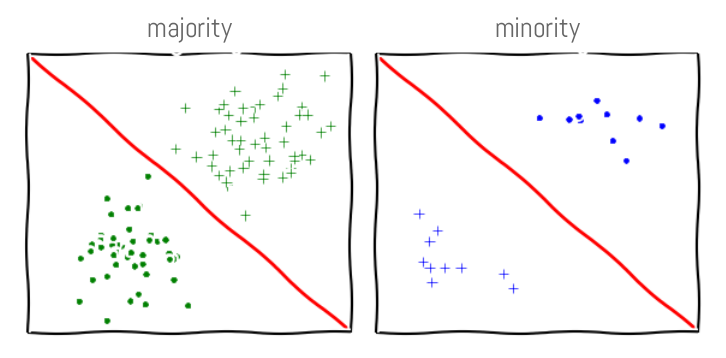

While preparing for my talk, I read an article by Moritz Hardt, entitled “How Big Data is Unfair.” In this article, Moritz notes that even in supposedly large data sets, there is always proportionally less data available about minorities. Moreover, statistical patterns that hold for the majority may be invalid for a given minority group. He gives, as an example, the task of classifying user names as “real” or “fake.” In one culture — comprising the majority of the training data — real names might be short and common, while in another they might be long and unique. As a result, the classic machine learning objective of “good performance on average,” may actually be detrimental to those in the minority group….
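The point about “good performance on average” can be made concrete with a small sketch. Everything here is a hypothetical illustration: the `looks_real` rule, its length threshold, and the synthetic names are assumptions made for this example and do not come from Hardt's article. The rule stands in for a classifier that has learned the majority pattern, in which real names are short.

```python
from collections import defaultdict


def looks_real(name, max_length=8):
    """Hypothetical rule fitted to the majority group: short names are 'real'."""
    return "real" if len(name) <= max_length else "fake"


def overall_accuracy(records):
    """Fraction of (group, truth, prediction) records predicted correctly."""
    return sum(truth == pred for _, truth, pred in records) / len(records)


def accuracy_by_group(records):
    """Accuracy computed separately for each group label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


# Synthetic data: every name is genuinely 'real'; the groups differ only in
# typical name length, and the minority is one tenth of the sample.
majority_names = ["Ann", "Bob", "Cara", "Dev", "Eve", "Finn", "Gus", "Hana", "Ian"]
minority_names = ["Maribellandria-Kestovanya"]

records = [("majority", "real", looks_real(n)) for n in majority_names]
records += [("minority", "real", looks_real(n)) for n in minority_names]

overall = overall_accuracy(records)
per_group = accuracy_by_group(records)
print("overall accuracy:", overall)
print("accuracy by group:", per_group)
```

On this toy data the overall accuracy is 0.9, while the per-group breakdown shows the minority group is misclassified every time. That is the sense in which an average can hide harm to a small group, and reporting per-group metrics is one simple way to surface it.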

As an alternative, I would advocate prioritizing vital social questions over data availability — an approach more common in the social sciences. Moreover, if we’re prioritizing social questions, perhaps we should take this as an opportunity to prioritize those questions explicitly related to minorities and bias, fairness, and inclusion. Of course, putting questions first — especially questions about minorities, for whom there may not be much available data — means that we’ll need to go beyond standard convenience data sets and general-purpose “hammer” methods. Instead we’ll need to think hard about how best to instrument data aggregation and curation mechanisms that, when combined with precise, targeted models and tools, are capable of elucidating fine-grained, hard-to-see patterns….(More).”

Code of Conduct: Cyber Crowdsourcing for Good

Patrick Meier at iRevolution: “There is currently no unified code of conduct for digital crowdsourcing efforts in the development, humanitarian or human rights space. As such, we propose the following principles (displayed below) as a way to catalyze a conversation on these issues and to improve and/or expand this Code of Conduct as appropriate.

This initial draft was put together by Kate Chapman, Brooke Simons and myself. The link above points to this open, editable Google Doc. So please feel free to contribute your thoughts by inserting comments where appropriate. Thank you.

An organization that launches a digital crowdsourcing project must:

- Provide clear volunteer guidelines on how to participate in the project so that volunteers are able to contribute meaningfully.

- Test their crowdsourcing platform prior to any project or pilot to ensure that the system will not crash due to obvious bugs.

- Disclose the purpose of the project, exactly which entities will be using and/or have access to the resulting data, to what end exactly, over what period of time and what the expected impact of the project is likely to be.

- Disclose whether volunteer contributions to the project will or may be used as training data in subsequent machine learning research.

- ….

An organization that launches a digital crowdsourcing project should:

- Share as much of the resulting data with volunteers as possible without violating data privacy or the principle of Do No Harm.

- Enable volunteers to opt out of having their tasks contribute to subsequent machine learning research.

- …”

Training Students to Extract Value from Big Data

“The nation’s ability to make use of data depends heavily on the availability of a workforce that is properly trained and ready to tackle high-need areas. Training students to be capable in exploiting big data requires experience with statistical analysis, machine learning, and computational infrastructure that permits the real problems associated with massive data to be revealed and, ultimately, addressed. Analysis of big data requires cross-disciplinary skills, including the ability to make modeling decisions while balancing trade-offs between optimization and approximation, all while being attentive to useful metrics and system robustness. To develop those skills in students, it is important to identify whom to teach, that is, the educational background, experience, and characteristics of a prospective data-science student; what to teach, that is, the technical and practical content that should be taught to the student; and how to teach, that is, the structure and organization of a data-science program.

Training Students to Extract Value from Big Data summarizes a workshop convened in April 2014 by the National Research Council’s Committee on Applied and Theoretical Statistics to explore how best to train students to use big data. The workshop explored the need for training and curricula and coursework that should be included. One impetus for the workshop was the current fragmented view of what is meant by analysis of big data, data analytics, or data science. New graduate programs are introduced regularly, and they have their own notions of what is meant by those terms and, most important, of what students need to know to be proficient in data-intensive work. This report provides a variety of perspectives about those elements and about their integration into courses and curricula…”

Big data is better data

TED Talk by Kenneth Cukier, Data Editor of The Economist: “Self-driving cars were just the start. What’s the future of big data-driven technology and design? In a thrilling science talk, Kenneth Cukier looks at what’s next for machine learning — and human knowledge…”